Previous articles have covered the advances that Augmented Reality has brought to melanoma surgery, and we made it very clear how aggressive this type of cancer is and how high its mortality becomes once it metastasizes (an 18% 5-year survival rate). The last time we looked at this topic, it involved lower extremity cases, where, let's face it, the anatomy is not as complicated. However, one of the main complications of melanoma is its high rate of metastasis to the head and neck area, where Central Nervous System metastases occur in 10-40% of cases. As you can probably guess, once this happens, the survival chances for melanoma patients decrease dramatically.

However, there is a way to learn about the characteristics of the suspected cancer, and the chances that it has already metastasized, with one simple procedure: intraoperative Sentinel Node (SN) identification and biopsy. In the head and neck this is not as easy as in the lower extremity, because the anatomy and the venous and lymphatic drainage are variable, which makes the procedure a challenge for the surgeon who performs it. Because of this, researchers from the Leiden University Medical Hospital in the Netherlands have proposed an Augmented Reality system that differs from what has been done so far.

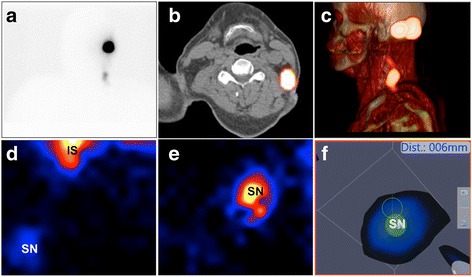

Most Augmented Reality systems play it safe: they build their 3D models from traditional imaging and, one way or another, superimpose them on the operative field. This technique has brought amazing results in many medical disciplines, but the system proposed by this team of researchers uses a gamma camera-based freehandSPECT along with the hybrid tracer Indocyanine Green-99mTc-nanocolloid. What does this mean? The Leiden University Medical Hospital is ditching the preoperative images altogether, making the awaited transition to real-time intraoperative images.

Eight patients with melanoma in the head and neck area were included in the study. Indocyanine green (ICG)-99mTc-nanocolloid was injected preoperatively, and during surgery freehandSPECT scans were acquired with a portable gamma camera after reference trackers had been placed. Those scans were displayed on a monitor in the operating room using Augmented Reality to determine the position of the SN. Due to ethical considerations, intraoperative fluoroscopy was also used to confirm the location of the SN, and its findings were compared with the information obtained from the system.

With the freehandSPECT system, 14 out of 15 SNs were identified, and navigation towards these nodes was successful in 13 of them. One SN could be identified only with traditional fluoroscopy: because of its location and because it was part of a cluster, it could not be found by navigation alone. In general, the system is doing a great job. No technique is 100% effective, but this one is proving itself to be a great tool for the future.
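To put those numbers in perspective, here is a quick back-of-the-envelope calculation in Python. The counts (15, 14 and 13) are the ones reported above. The helper name `rate` and the choice of denominators are our own assumptions for illustration, not figures published by the researchers.

```python
def rate(successes: int, total: int) -> float:
    """Return successes / total as a percentage, rounded to one decimal."""
    if total <= 0:
        raise ValueError("total must be positive")
    return round(100 * successes / total, 1)


# Counts as reported in the study summary above.
total_sns = 15   # sentinel nodes in the eight patients
identified = 14  # SNs identified with freehandSPECT
navigated = 13   # SNs successfully reached by navigation

# Share of all SNs that freehandSPECT identified.
identification_rate = rate(identified, total_sns)

# Navigation success, counted two ways (which denominator is the
# right one is our assumption, so both are shown).
navigation_rate_of_identified = rate(navigated, identified)
navigation_rate_overall = rate(navigated, total_sns)

print(f"Identified: {identification_rate}%")
print(f"Navigated (of identified): {navigation_rate_of_identified}%")
print(f"Navigated (of all SNs): {navigation_rate_overall}%")
```

With only 15 nodes across eight patients, these percentages are rough indicators rather than robust statistics.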

We are really happy about the freehandSPECT AR technique for intraoperative SN detection in the head and neck area, although it certainly has limitations. We can only wonder: what if it were combined with SPECT/CT images? What if the information obtained with the portable system were complemented with them? Could we then calculate a precision percentage for it? Sure, this would increase the preparation time for surgery (although CT scans were done for these patients too), but no one will attempt head and neck surgery without prior preparation.

What did you think about this system? Let us know your opinions in the comments section.